Optimizing Web Performance with Edge Deployment 🚀

Distance is the ultimate bottleneck. Learn how CodeVelo uses Edge Deployment to push logic and assets closer to your users. By cutting latency and improving Time to First Byte (TTFB), we help keep your site fast, reliable, and scalable globally. The future of web performance is at the edge. 🚀

In the race for digital dominance, distance is the ultimate bottleneck. You can have the most optimized codebase in the world, but if your user in Singapore has to wait for a handshake from a server in Virginia, physics will always win.

At CodeVelo.dev, we don't accept latency as a cost of doing business. We utilize Edge Deployment to move your application’s logic and assets out of centralized data centers and into the "neighborhoods" of your global users.

Here is how deploying to the edge transforms your site from a standard web app into a high-performance global powerhouse.

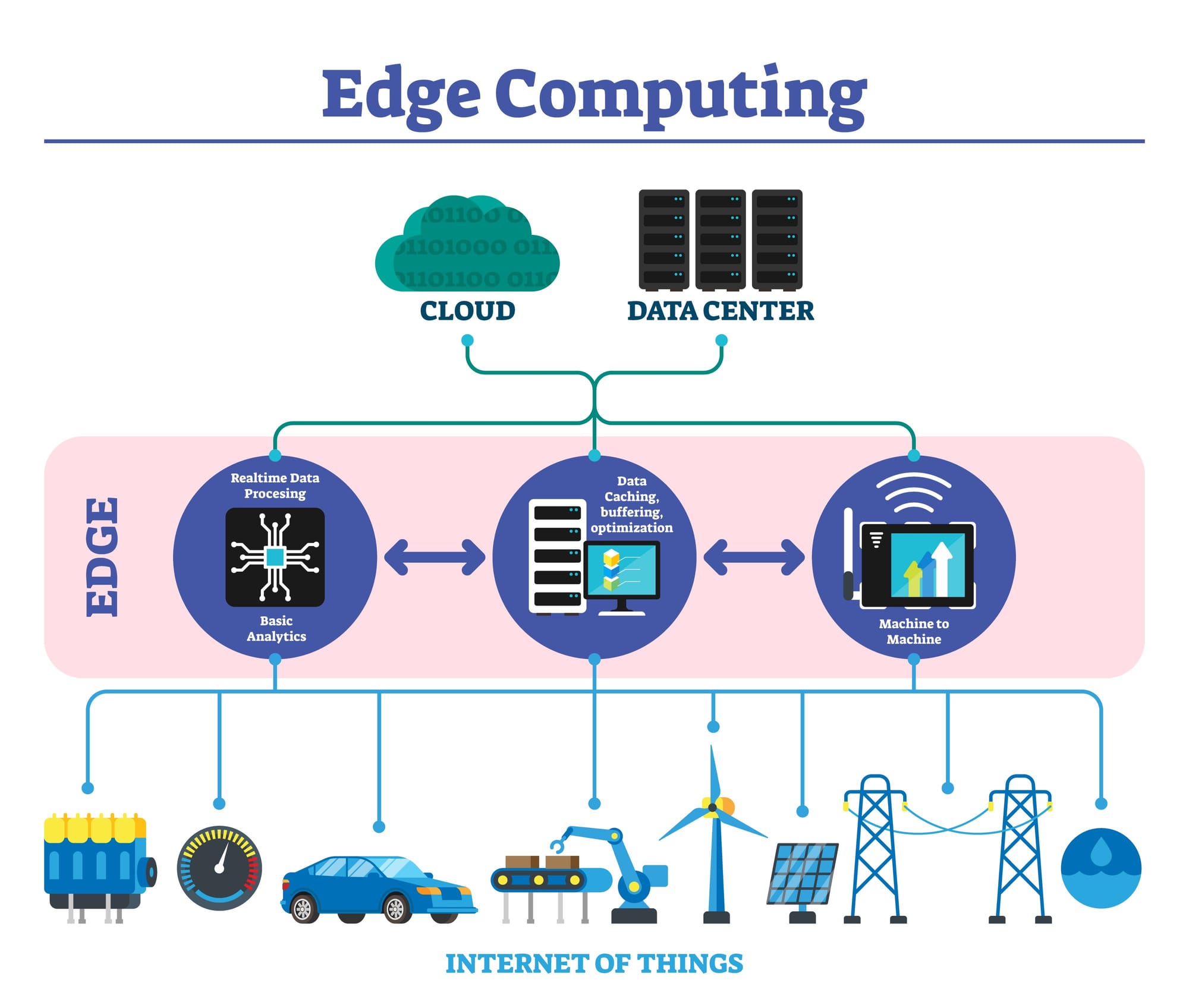

What is Edge Deployment?

Traditional hosting relies on an "Origin Server." When a user makes a request, it travels across the globe to that single source. Edge deployment flips this model by using a distributed network of servers (the "Edge").

When you deploy to the edge, your code runs on many nodes at once (hundreds, on the larger networks). When a request comes in, it is routed to a nearby node, usually the closest one in network terms, which runs the logic and serves the response. This doesn't eliminate the "Round Trip Time" (RTT) that plagues traditional architectures, but it shrinks each round trip from a cross-ocean journey to a short regional hop.
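To put rough numbers on that, here is a back-of-the-envelope sketch. It assumes light in fiber covers about 200 km per millisecond and that cables follow the shortest path, which they never quite do, so treat the results as lower bounds. The coordinates and the 50 km edge distance are illustrative values, not measurements.

```typescript
// Rough lower bound on network round-trip time from distance alone.
// Assumption: light in optical fiber covers about 200 km per millisecond
// (roughly two-thirds of its speed in a vacuum). Real routes are longer.
const FIBER_KM_PER_MS = 200;
const EARTH_RADIUS_KM = 6371;

interface Coordinates {
  lat: number; // degrees
  lon: number; // degrees
}

const toRadians = (degrees: number): number => (degrees * Math.PI) / 180;

// Great-circle distance between two points, using the haversine formula.
function distanceKm(a: Coordinates, b: Coordinates): number {
  const dLat = toRadians(b.lat - a.lat);
  const dLon = toRadians(b.lon - a.lon);
  const h =
    Math.sin(dLat / 2) ** 2 +
    Math.cos(toRadians(a.lat)) * Math.cos(toRadians(b.lat)) * Math.sin(dLon / 2) ** 2;
  return 2 * EARTH_RADIUS_KM * Math.atan2(Math.sqrt(h), Math.sqrt(1 - h));
}

// Best-case time for one request/response round trip over that distance.
function minRoundTripMs(km: number): number {
  return (2 * km) / FIBER_KM_PER_MS;
}

// Approximate coordinates, chosen for illustration.
const singapore: Coordinates = { lat: 1.35, lon: 103.82 };
const virginia: Coordinates = { lat: 39.04, lon: -77.49 };

const originKm = distanceKm(singapore, virginia);
const edgeKm = 50; // hypothetical distance to an in-region edge node

console.log(`origin: ${originKm.toFixed(0)} km, at least ${minRoundTripMs(originKm).toFixed(0)} ms per round trip`);
console.log(`edge:   ${edgeKm} km, at least ${minRoundTripMs(edgeKm).toFixed(1)} ms per round trip`);
```

A fresh HTTPS connection needs several round trips (TCP, TLS, then the request itself) before the first byte arrives, so the gap between the two numbers multiplies in practice.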

The Triple Threat: Why the Edge Wins

1. Low-Latency Static & Dynamic Content

Most people are familiar with CDNs for images or CSS. Edge Deployment takes this further by running Edge Functions. This means server-side logic, like authentication, A/B testing, or localized content, runs on a node near the user (often in the same region or metro area) instead of a continent away.
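As an illustration of the A/B testing case, here is a minimal sketch of the kind of logic an Edge Function might run. It is plain TypeScript rather than any specific platform's handler API, and the names (`assignVariant`, the experiment key, the visitor ID) are hypothetical. The idea is that hashing a stable visitor ID lets every edge node reach the same decision without a shared session store.

```typescript
type Variant = "control" | "treatment";

// FNV-1a-style 32-bit hash over the string's UTF-16 code units.
// Good enough for bucketing; not a cryptographic hash.
function fnv1a(input: string): number {
  let hash = 0x811c9dc5;
  for (let i = 0; i < input.length; i++) {
    hash ^= input.charCodeAt(i);
    hash = Math.imul(hash, 0x01000193) >>> 0;
  }
  return hash >>> 0;
}

// Deterministically assign a visitor to a variant. The same visitor and
// experiment always land in the same bucket, whichever node answers.
function assignVariant(
  visitorId: string,
  experiment: string,
  treatmentPercent: number
): Variant {
  const bucket = fnv1a(`${experiment}:${visitorId}`) % 100; // 0..99
  return bucket < treatmentPercent ? "treatment" : "control";
}

// Hypothetical usage: a 50/50 split on a "new-checkout" experiment.
console.log(assignVariant("visitor-123", "new-checkout", 50));
```

In a real deployment the visitor ID would typically come from a cookie, and the chosen variant would decide which cached page or response the node serves.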

This approach can give your Core Web Vitals a real boost, specifically Largest Contentful Paint (LCP), because the browser can't start rendering until the first bytes of the response arrive. As we discussed in our post on Building Lightning-Fast Websites with Modern Web Stacks, choosing the right framework is only half the battle; how you deliver that framework's output is what seals the deal.

2. Smarter Scalability

Traditional servers can be overwhelmed by traffic spikes. Edge networks are designed to handle massive concurrency by distributing the load across a global mesh. If one node experiences a surge, the network automatically reroutes traffic to the next nearest healthy node. This helps your site stay available even during viral moments or DDoS attacks.
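The rerouting idea can be sketched as a toy model. Real edge networks do this at the routing layer, with health checks and techniques such as anycast, so the `EdgeNode` shape and `pickNode` function below are hypothetical stand-ins that only show the selection rule: nearest node that is still healthy.

```typescript
interface EdgeNode {
  id: string;
  distanceKm: number; // distance from the user, in this toy model
  healthy: boolean;   // result of the most recent health check
}

// Pick the nearest healthy node, or undefined if none are available.
// filter() returns a new array, so the caller's list is not reordered.
function pickNode(nodes: EdgeNode[]): EdgeNode | undefined {
  return nodes
    .filter((node) => node.healthy)
    .sort((a, b) => a.distanceKm - b.distanceKm)[0];
}

// Hypothetical node list: the closest node is overloaded.
const nodes: EdgeNode[] = [
  { id: "sin-1", distanceKm: 20, healthy: false },
  { id: "kul-1", distanceKm: 320, healthy: true },
  { id: "hkg-1", distanceKm: 2600, healthy: true },
];

console.log(pickNode(nodes)?.id); // the next nearest healthy node
```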

3. SEO and Global Reach

Google’s ranking signals include Core Web Vitals, and Time to First Byte (TTFB) feeds directly into them: a slow first byte delays everything that follows, including LCP. By leveraging edge deployment, you can keep your TTFB consistently low across every continent. This global performance consistency is a core part of our Performance Optimization Every Sprint philosophy: optimizing for the user experience everywhere, not just in your local dev environment.
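One way to check "consistently low" is to look at TTFB per region instead of as a single global average. The sketch below groups samples by region and reports the 75th percentile, which is the percentile Google's Core Web Vitals guidance uses for assessment. The region names and numbers are made up for illustration; in practice the samples would come from your own real-user monitoring.

```typescript
interface TtfbSample {
  region: string;
  ttfbMs: number;
}

// Nearest-rank percentile: the smallest value with at least p% of
// samples at or below it.
function percentile(values: number[], p: number): number {
  if (values.length === 0) throw new Error("no samples");
  const sorted = [...values].sort((a, b) => a - b);
  const rank = Math.ceil((p / 100) * sorted.length); // 1-based rank
  return sorted[Math.max(rank, 1) - 1];
}

// Group samples by region and compute each region's 75th percentile.
function p75ByRegion(samples: TtfbSample[]): Record<string, number> {
  const grouped: Record<string, number[]> = {};
  for (const sample of samples) {
    (grouped[sample.region] ??= []).push(sample.ttfbMs);
  }
  const result: Record<string, number> = {};
  for (const region of Object.keys(grouped)) {
    result[region] = percentile(grouped[region], 75);
  }
  return result;
}

// Made-up samples for two hypothetical regions.
const samples: TtfbSample[] = [
  { region: "us-east", ttfbMs: 40 },
  { region: "us-east", ttfbMs: 50 },
  { region: "us-east", ttfbMs: 60 },
  { region: "us-east", ttfbMs: 80 },
  { region: "ap-southeast", ttfbMs: 45 },
  { region: "ap-southeast", ttfbMs: 55 },
  { region: "ap-southeast", ttfbMs: 70 },
  { region: "ap-southeast", ttfbMs: 90 },
];

console.log(p75ByRegion(samples));
```

If one region's p75 sits far above the others, that is usually the first place to look for requests still falling through to a distant origin.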

Implementing Edge Logic: Real-World Use Cases

How does this look in practice for a CodeVelo-optimized project?

- Geo-Personalization: Automatically redirecting users to their local language or currency at the edge, without a single flicker in the UI.

- Edge Middleware: Running security checks and authentication before the request ever reaches your origin server or main database.

- Image Optimization: Resizing and reformatting images on the fly at the edge node based on the user's device type.
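To make the first use case concrete, here is a minimal sketch of a geo-personalization redirect. It assumes the edge platform hands your function a two-letter country code for each request, which most do in some form, though the exact mechanism varies. The `EdgeRequest` shape, the locale table, and `localizedRedirect` are hypothetical names for illustration.

```typescript
interface EdgeRequest {
  path: string;     // e.g. "/pricing"
  country?: string; // ISO 3166-1 alpha-2 code, assumed to be supplied by the platform
}

// Hypothetical mapping from country to site locale.
const LOCALE_BY_COUNTRY: Record<string, string> = {
  DE: "de",
  FR: "fr",
  JP: "ja",
  SG: "en",
};
const SUPPORTED_LOCALES = ["en", "de", "fr", "ja"];
const DEFAULT_LOCALE = "en";

// Return the localized path to redirect to, or null when the path
// already starts with a supported locale and needs no redirect.
function localizedRedirect(request: EdgeRequest): string | null {
  const firstSegment = request.path.split("/")[1] ?? "";
  if (SUPPORTED_LOCALES.indexOf(firstSegment) !== -1) {
    return null;
  }
  const locale =
    (request.country && LOCALE_BY_COUNTRY[request.country.toUpperCase()]) ||
    DEFAULT_LOCALE;
  return `/${locale}${request.path}`;
}

console.log(localizedRedirect({ path: "/pricing", country: "DE" }));
console.log(localizedRedirect({ path: "/de/pricing", country: "DE" }));
```

Because the decision happens before any HTML is sent, the user lands on the right page directly instead of loading a default version and being switched client-side.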

The Verdict: The Future is Distributed

At CodeVelo, we believe that the central server model is becoming a legacy concept. For modern, scalable apps, the edge isn't just an add-on; it's the standard. By pushing logic closer to the user, we reduce latency, improve reliability, and provide the "instant-on" feel that defines world-class digital products.

Ready to move your stack to the edge? Explore our deployment workflows at CodeVelo.dev.